Difference between revisions of "GX mipi camera manual"

Revision History

- 2026/04/04: Initial draft largely completed

- 2026/03/07: Completed sections on basic functions, image capture, and image attributes

Revision as of 07:25, 15 April 2026

GX Series MIPI Camera Module Manual

1 Overview

The GX series camera modules feature a high-performance ISP (Image Signal Processor), supporting multiple operating modes and providing extensive configuration options. The products have been thoroughly validated in terms of system stability, manufacturing quality control, and supply capability, making them suitable for embedded vision systems and AI vision applications.

GX series MIPI camera modules use a standard 22-pin FPC user interface, which simplifies system integration. They can be connected directly to a variety of mainstream embedded platforms, such as those from Raspberry Pi Ltd, the NVIDIA Jetson series, and Rockchip RK3588-based platforms.

This document mainly introduces the functional features and working principles of the GX series products.

For the following topics, please refer to the corresponding dedicated documentation:

- Hardware Interface Manual

- Register Description Document

- Configuration Script Guide

- Driver and User Guides for each embedded platform

In this document, each functional section ends with a Script Function entry. This entry lists the relevant commands of the gx_mipi_i2c.sh script so that users can configure and debug specific features conveniently.

1.1 Camera Model List

| Series | Model | Max Resolution | Shutter Mode |
|---|---|---|---|
| GX series | GX-MIPI-IMX662 | 1920×1080@60 fps | Rolling Shutter |
| GX series | GX-MIPI-IMX664 | 2688×1520@30 fps | Rolling Shutter |
| GX series | GX-MIPI-AR0234 | 1920×1200@54 fps | Global Shutter |

2 Basic Functions

This chapter introduces the basic management functions of the camera, including device information access, parameter management, and system control.

Through these registers, users can obtain device identification information, manage camera configuration parameters, and perform system-level operations.

2.1 Device Information

The camera provides several read-only registers for querying basic device information. These parameters can be used for device identification, system management, and software debugging.

The information includes:

- Manufacturer name

- Product model

- Sensor model

- Device serial number

- Firmware version

2.1.1 Manufacturer Name

The name of the camera manufacturer.

Script Function: manufacturer

2.1.2 Product Model

The model identifier of the camera.

Script Function: model

2.1.3 Sensor Model

The image sensor model used by the camera.

Script Function: sensorname

2.1.4 Serial Number

Each camera is assigned a unique serial number during manufacturing.

The serial number contains information about the production date, batch, and unit number.

Script Function: serialno

2.1.5 Firmware Version

Returns the firmware version information of the camera.

This register is a 32-bit value in the format:

Where:

- AA.BB represents the control firmware version (C version)

- CC.DD represents the logic firmware version (L version)
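The exact byte layout of the firmware version word is not given above. The following is a minimal Python sketch. It assumes the word is packed as 0xAABBCCDD with AA in the most significant byte and each field an unsigned byte; check this assumption against the Register Description Document. The function name decode_firmware_version is hypothetical.

```python
def decode_firmware_version(raw: int) -> dict:
    """Split the 32-bit firmware word into control and logic version fields.

    Assumed layout (not confirmed by this manual): 0xAABBCCDD, with AA in
    the most significant byte and every field an unsigned byte.
    """
    if not 0 <= raw <= 0xFFFFFFFF:
        raise ValueError("firmware version must fit in 32 bits")
    aa = (raw >> 24) & 0xFF
    bb = (raw >> 16) & 0xFF
    cc = (raw >> 8) & 0xFF
    dd = raw & 0xFF
    return {
        "control": (aa, bb),  # AA.BB: control firmware (C version)
        "logic": (cc, dd),    # CC.DD: logic firmware (L version)
    }
```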

2.2 Parameter Management

The camera supports saving the current configuration parameters to internal Flash memory.

Saved parameters are automatically loaded when the camera powers on.

2.2.1 Save Parameters

Saves the current camera configuration parameters to Flash.

Notes:

- This operation erases and rewrites the system Flash memory

- The power supply must remain stable during the operation

- Frequent execution of this operation is not recommended

Script Function: paramsave

2.2.2 Restore Factory Parameters

Restores all camera parameters to the factory default configuration.

Notes:

- This operation erases and rewrites the system Flash memory

- The power supply must remain stable during the operation

- Frequent factory resets are not recommended

Script Function: factoryparam

2.3 System Control

The camera provides several basic system control functions, such as device reboot and system uptime query.

2.3.1 System Reboot

Executing this operation will restart the camera.

Script Function: reboot

2.3.2 System Timestamp

The elapsed running time since the camera was powered on.

This parameter can be used for:

- System debugging

- Device uptime statistics

- Simple synchronization reference

Script Function: timestamp

2.3.3 I²C Address Configuration

The camera allows the I²C communication address to be configured through a register.

Users can modify the camera's I²C address according to system requirements to avoid address conflicts in multi-device systems.

Configuration details:

- Configurable address range: 0x08 – 0x77

- After modification, the Save Parameters operation must be executed

- The new address takes effect after the device is rebooted

Script Function: i2caddr
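The address change procedure above can be sketched as an ordered list of script functions. This is a minimal illustration. The (function, argument) tuples and the helper name plan_i2c_address_change are hypothetical and do not reflect the actual calling syntax of gx_mipi_i2c.sh.

```python
I2C_ADDR_MIN = 0x08  # lowest configurable address (range given above)
I2C_ADDR_MAX = 0x77  # highest configurable address


def plan_i2c_address_change(new_addr: int) -> list:
    """Return the ordered steps for changing the camera's I2C address.

    The tuple format is a hypothetical representation, not the script's syntax.
    """
    if not I2C_ADDR_MIN <= new_addr <= I2C_ADDR_MAX:
        raise ValueError(
            f"address 0x{new_addr:02X} outside 0x{I2C_ADDR_MIN:02X}-0x{I2C_ADDR_MAX:02X}"
        )
    return [
        ("i2caddr", new_addr),  # 1. write the new address
        ("paramsave", None),    # 2. save parameters to Flash (required)
        ("reboot", None),       # 3. the new address takes effect after reboot
    ]
```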

3 Image Acquisition

3.1 Rolling Shutter and Global Shutter

Image sensors can be classified into two types based on their exposure method: rolling shutter and global shutter.

Different exposure methods behave differently when capturing moving objects or operating in triggered modes.

3.1.1 Rolling Shutter

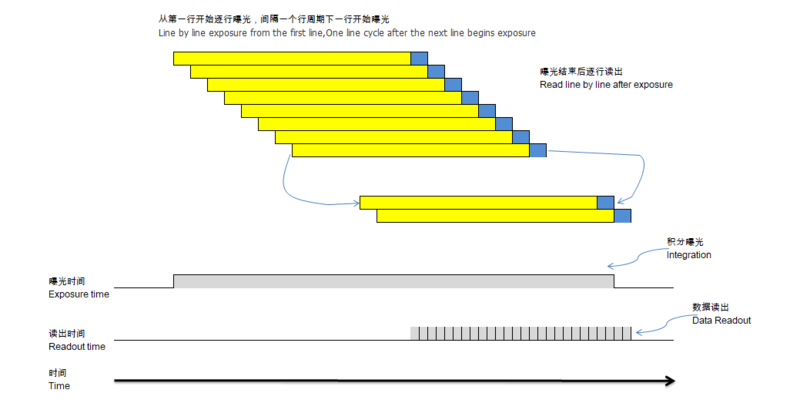

The operation of a rolling shutter sensor is illustrated in the following figure.

In this mode, the rows of the image start exposure sequentially:

- The first row begins exposure first.

- After one row period, the second row starts exposure.

- This process continues, with row N starting exposure one row period after row N−1.

Once the first row finishes exposure, data readout begins. Reading each row of data takes one row period, including the row blanking time.

When the first row has been read out, the second row begins reading. Subsequent rows are read sequentially until the entire image is output.

Rolling shutter sensors have the following characteristics:

- Relatively simple structure

- Lower cost

- Capable of achieving high resolution

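Because each row is exposed at a slightly different time, fast-moving objects can appear skewed or distorted (the rolling shutter effect). Combined with the characteristics above, this makes rolling shutter well suited to imaging static scenes or slowly moving targets.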
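The row timing described in this section can be modelled in a few lines of Python. This simplified sketch assumes a constant row period and ignores frame blanking. The real row period depends on the sensor and video mode and is not given in this manual. For a global shutter sensor, all rows share one exposure window, so the equivalent skew is zero.

```python
def rolling_shutter_timing(num_rows: int, row_period_us: float, exposure_us: float):
    """Return (exposure start, exposure end / readout start, readout end) per row, in µs.

    Row n starts exposing n row periods after row 0. Once its exposure ends,
    the row takes one row period to read out.
    """
    rows = []
    for n in range(num_rows):
        start = n * row_period_us
        end = start + exposure_us
        rows.append((start, end, end + row_period_us))
    return rows


def frame_skew_us(num_rows: int, row_period_us: float) -> float:
    """Time between the first and the last row starting exposure."""
    return (num_rows - 1) * row_period_us
```

For example, take 1080 rows and a hypothetical row period of 14.8 µs. frame_skew_us(1080, 14.8) is about 15,969 µs, so the last row starts exposing roughly 16 ms after the first.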

3.1.2 Global Shutter

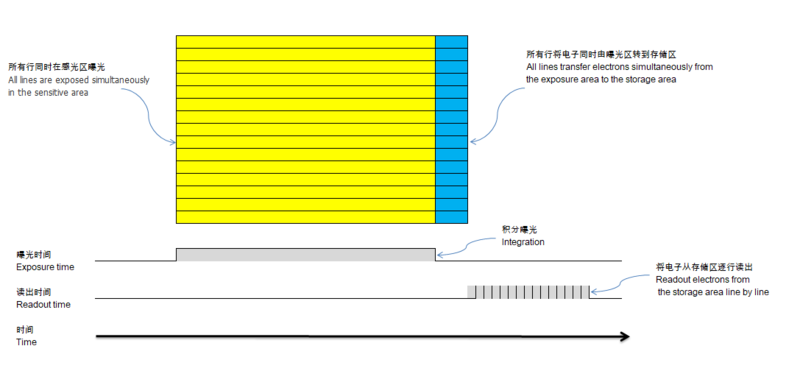

The operation of a global shutter sensor is illustrated in the following figure.

In global shutter mode:

- All pixels on the sensor begin exposure simultaneously.

- All pixels end exposure simultaneously.

After the exposure ends, the sensor transfers the charges from the photosensitive area to the storage area, and then reads out pixel data row by row.

This method ensures that:

- All pixels are sampled at the same time.

- Moving objects are free from the skew distortion caused by row-by-row exposure.

Therefore, global shutter is particularly suitable for capturing fast-moving targets, such as:

- Industrial inspection

- Robotic vision

- Motion tracking

3.2 Start/Stop Acquisition

Users can send a start acquisition or stop acquisition command to the camera at any time.

When the camera receives a start acquisition command:

- If it is currently operating in video streaming mode, the camera will immediately begin exposure and continuously output images.

- If it is currently operating in trigger mode, the camera enters a waiting state until it receives a trigger signal.

At this point, the camera status changes to Running.

When the camera receives a stop acquisition command:

- The camera will first complete the transmission of the current image frame to ensure frame data integrity.

- Then it will stop outputting images.

- The camera status changes to Standby.

Note:

If the current configuration is multi-frame trigger mode and the trigger sequence is not yet complete, the stop acquisition command will interrupt the ongoing trigger process. Therefore, stopping acquisition only guarantees the integrity of the current frame, but does not guarantee the integrity of the entire trigger sequence.

In general, the driver automatically sends start/stop acquisition commands, so users do not need to manually control the registers.

Script function: imgacq
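The Standby/Running behaviour described above can be summarized as a small state model. This is a hypothetical Python sketch for reasoning about the documented rules. It is not camera firmware or driver code, and the mode labels "stream" and "trigger" are illustrative.

```python
class AcquisitionModel:
    """Toy model of the start/stop acquisition rules (not firmware code)."""

    def __init__(self, work_mode: str):
        self.work_mode = work_mode        # "stream" or "trigger" (illustrative labels)
        self.state = "Standby"
        self.activity = "idle"
        self.frame_in_progress = False
        self.frames_output = 0
        self.pending_trigger_frames = 0   # frames left in a multi-frame trigger sequence

    def start(self):
        self.state = "Running"
        if self.work_mode == "stream":
            self.activity = "streaming"   # expose and output continuously
        else:
            self.activity = "waiting_for_trigger"

    def stop(self):
        if self.frame_in_progress:
            self.frames_output += 1       # the current frame is completed first
            self.frame_in_progress = False
        self.pending_trigger_frames = 0   # an unfinished trigger sequence is abandoned
        self.activity = "idle"
        self.state = "Standby"
```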

3.3 Video Mode

The GX series cameras support multiple video modes, with each mode corresponding to a specific resolution, maximum frame rate, and image readout method.

Readout methods include Normal Mode, Binning Mode, and Subsampling Mode. In video streaming mode, the camera continuously exposes images and outputs image data in real time according to the resolution, frame rate, and readout method of the selected video mode.

The supported video modes vary by camera model, as detailed in the table below:

| Model | videomode | Resolution | Minimum Frame Rate | Maximum Frame Rate | Readout Method |
|---|---|---|---|---|---|
| GX-MIPI-IMX662 | 1 | 1920×1080 | 0.065 fps | 60 fps | Normal |
| GX-MIPI-IMX664 | 1 | 2688×1520 | TBD | 30 fps | Normal |
| GX-MIPI-IMX664 | 2 | 1344×760 | TBD | 100 fps | Binning |
| GX-MIPI-AR0234 | 1 | 1920×1200 | TBD | 54 fps | Normal |
| GX-MIPI-AR0234 | 2 | 1920×1080 | TBD | 60 fps | Normal |
| GX-MIPI-AR0234 | 3 | 1600×1200 | TBD | 60 fps | Normal |

Script functions: videomodecap, videomodenum, vidoemodewh1-vidoemodewh8, videomodeparam1-videomodeparam8, videomode, fps, maxfps, curwh

3.3.1 Pixel Readout Modes

The GX series cameras support multiple pixel readout modes: Normal Mode, Binning Mode, and Subsampling Mode. Each mode has different characteristics in terms of resolution, signal-to-noise ratio (SNR), and data bandwidth, and can be selected according to application requirements.

- Normal Mode

The sensor reads out the full pixel array row by row, with every pixel participating in acquisition and output. This mode provides the highest resolution and best image detail, but requires higher data bandwidth.

- Binning Mode

Adjacent pixels are combined internally on the sensor before output, improving SNR and low-light performance while reducing resolution and data bandwidth. This mode is suitable for low-light or high-sensitivity applications.

- Subsampling Mode

Pixels are read out at fixed intervals, reducing resolution and data volume while keeping SNR largely unchanged. This mode is suitable for scenarios that require higher frame rates or lower bandwidth.

Mode Comparison

| Mode | Readout Method | Resolution | SNR | Data Bandwidth |
|---|---|---|---|---|
| Normal | Full Pixel Readout | High | Baseline | High |
| Binning | Pixel Binning | Medium | Improved | Low |
| Subsampling | Interval | Medium | Largely Unchanged | Low |
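The following sketch applies a 2×2 version of binning and of subsampling to a small single-channel array to show how they differ. It is a conceptual example only. Real color sensors combine same-color Bayer pixels, and on-sensor binning may happen in the charge or analog domain rather than as a digital average. Both operations quarter the pixel count (for example, 2688×1520 becomes 1344×760). However, averaging four pixels with independent noise lowers the noise by roughly a factor of two (√4), while subsampling keeps single-pixel noise.

```python
def bin_2x2(img):
    """Average each 2x2 block (conceptual binning on a single-channel image)."""
    h, w = len(img), len(img[0])
    return [
        [(img[y][x] + img[y][x + 1] + img[y + 1][x] + img[y + 1][x + 1]) / 4
         for x in range(0, w - 1, 2)]
        for y in range(0, h - 1, 2)
    ]


def subsample_2x2(img):
    """Keep every second pixel in each direction (conceptual subsampling)."""
    return [row[::2] for row in img[::2]]


img = [[1, 2, 3, 4],
       [5, 6, 7, 8],
       [9, 10, 11, 12],
       [13, 14, 15, 16]]
print(bin_2x2(img))        # each output pixel combines four inputs
print(subsample_2x2(img))  # each output pixel is a single input pixel
```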

3.3.2 Current Image Parameters

The camera provides a set of registers that allow accurate reading of the current image width, height, and readout mode during secondary development or debugging.

Script functions: curwh, readmode

3.3.2.1 Pixel Format

The camera’s output format complies with the MIPI CSI-2 standard. Currently supported pixel formats are UYVY and YUYV, with UYVY as the default.

Script function: pixelformat

3.3.3 Frame Rate Configuration

3.3.3.1 Frame Rate Range

The camera provides the minimum and maximum frame rates supported in the current operating mode, which can be read via read-only registers.

Script functions: minfps, maxfps

3.3.3.2 Setting the Frame Rate

The current frame rate can be set to any value between the minimum and maximum frame rates. Note that the frame rate must be configured while acquisition is stopped (Standby state) for the setting to take effect.

Script function: fps
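The two rules in this section can be checked before writing fps: the value must lie between minfps and maxfps, and it may only be changed in Standby. This is a minimal sketch. The function name validate_fps is hypothetical, and the limits would come from the minfps and maxfps script functions.

```python
def validate_fps(requested: float, min_fps: float, max_fps: float, state: str) -> float:
    """Check a frame-rate request against the range and Standby rules."""
    if state != "Standby":
        raise RuntimeError("stop acquisition (Standby) before changing the frame rate")
    if not min_fps <= requested <= max_fps:
        raise ValueError(f"{requested} fps outside [{min_fps}, {max_fps}]")
    return requested
```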

3.3.3.3 Frame Count Statistics

The camera supports statistics for both sensor output frames and camera output frames. The frame count is reset each time acquisition is started.

Script function: framecount

3.4 Work Modes

The camera may support multiple work modes, including Video Stream Mode, Normal Trigger Mode, Level Trigger Mode, and Multi-Camera Synchronization Mode.

The types of operating modes supported may vary depending on the camera model and can be queried using the workmodecap command.

The working mode is a fundamental camera parameter. Please note that certain features may be restricted depending on the selected mode, as shown in the table below:

3.4.1 Functional Limitations by Working Mode for GX-MIPI-IMX662

| Work Mode | Sensor Mode | Auto / Manual Exposure | Slow Shutter (Auto Frame Rate Reduction) | Flexible FPS Setting | 3D Denoise |
|---|---|---|---|---|---|
| LINEAR Stream Mode | Master | Both Supported | Supported | Supported | Supported |
| DOL WDR Stream Mode | Master | AE Only | Not Supported | Only 25/30 FPS | Supported |
| Normal Trigger | Slave | ME Only | Not Supported | Supported | Not Supported |
| Sync Mode - Master | Master | Both Supported | Not Supported | Supported | Supported |
| Sync Mode - Slave | Slave | Both Supported | Not Supported | Not Supported | Supported |

Script functions: workmodecap, workmode

3.4.2 Switching Work Modes

Operating modes cannot be switched directly while the camera is running.

The following sequence must be followed:

- Send a stop acquisition command.

- Wait for the camera to enter Standby state.

- Modify the operating mode configuration.

- Start acquisition again.

Script function: workmode
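The switching sequence above can be sketched as follows. The callbacks send and read_state are hypothetical wrappers around however the camera is actually accessed. The imgacq values 0 (stop) and 1 (start) are assumptions, not documented register values.

```python
import time


def switch_work_mode(send, read_state, new_mode, timeout_s=2.0, poll_s=0.05):
    """Stop acquisition, wait for Standby, set the work mode, then restart.

    send(function, value) and read_state() are hypothetical callbacks.
    """
    send("imgacq", 0)                       # 1. stop acquisition (value assumed)
    deadline = time.monotonic() + timeout_s
    while read_state() != "Standby":        # 2. wait for the Standby state
        if time.monotonic() > deadline:
            raise TimeoutError("camera did not enter Standby")
        time.sleep(poll_s)
    send("workmode", new_mode)              # 3. change the work mode
    send("imgacq", 1)                       # 4. start acquisition again (value assumed)
```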

3.4.3 Video Stream Mode

In video stream mode, the camera continuously exposes and outputs image data according to the configured video mode (videomode) and frame rate (fps).

In general, the driver automatically sets the registers based on the application layer’s configuration for video mode and frame rate, so users do not need to manually operate the registers.

Script functions: videomode, fps, maxfps

3.4.4 Normal Trigger Mode

3.4.4.1 Rolling Shutter

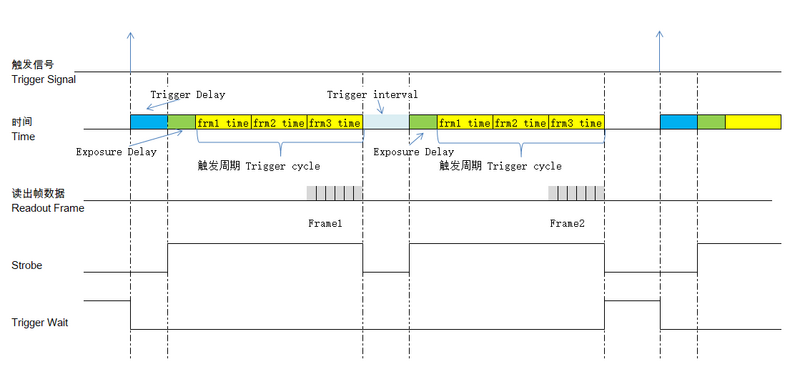

Applicable models: GX-MIPI-IMX662, GX-MIPI-IMX664

To ensure consistency of triggered images, the generation of a complete image frame (from exposure to output) requires three consecutive image cycles. Therefore, the maximum frame rate in video stream mode for these models is only one-third of the selected video mode frame rate.

These three consecutive image cycles are defined as a Trigger Cycle.

In normal trigger mode, if multiple frames are output per trigger, the trigger delay starts from the current trigger signal, while the trigger interval and exposure delay take effect before each trigger cycle begins, ensuring consistent image output for every cycle.

The figure below shows an example with trigger frame count set to 2:

Script functions: trgnum, trginterval, trgsrc, trgexp_delay, trgdelay, triggercyclemin
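For the rolling shutter models, the three-cycle rule gives a simple upper bound on the trigger rate. This sketch assumes one frame per trigger and ignores trigger delay, exposure delay, and trigger interval. The triggercyclemin script function is likely a better source for the actual minimum trigger cycle; its exact semantics are assumed here.

```python
def rs_trigger_cycle_s(video_mode_fps: float) -> float:
    """Trigger cycle for the rolling shutter models: three image cycles."""
    return 3.0 / video_mode_fps


def rs_max_trigger_rate(video_mode_fps: float) -> float:
    """Highest trigger rate (one frame per trigger) that avoids lost triggers."""
    return video_mode_fps / 3.0
```

With a 60 fps video mode, rs_max_trigger_rate(60) gives 20 triggers per second, which is one trigger cycle every 50 ms.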

3.4.4.2 Global Shutter (Smartsens Type)

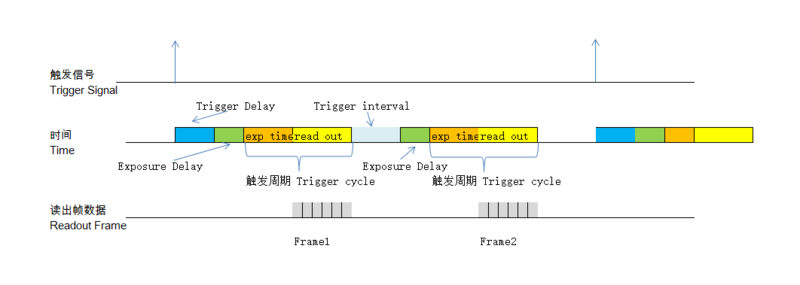

Applicable models: GX-MIPI-AR0234

For Global Shutter sensors of this type, the acquisition of one frame consists of two stages:

- Exposure Stage

- Readout Stage

The next frame’s exposure can only start after readout is complete.

Thus, in trigger mode, a Trigger Cycle consists of one exposure period + one readout period.

Because the exposure time affects the total duration of the trigger cycle, the maximum frame rate in trigger mode is usually lower than that in video stream mode.

The figure below shows an example with trigger frame count set to 2:

Script functions: trgnum, trginterval, trgsrc, trgexp_delay, trgdelay, triggercyclemin
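For this global shutter type, the trigger cycle is one exposure plus one readout, so a longer exposure directly lowers the maximum trigger rate. This minimal sketch makes simplifying assumptions: one frame per trigger, and no trigger delay, exposure delay, or trigger interval. The readout time depends on the sensor and mode and is not specified in this manual.

```python
def gs_trigger_cycle_s(exposure_s: float, readout_s: float) -> float:
    """Trigger cycle for this global shutter type: one exposure plus one readout."""
    return exposure_s + readout_s


def gs_max_trigger_rate(exposure_s: float, readout_s: float) -> float:
    """Highest trigger rate (one frame per trigger) that avoids lost triggers."""
    return 1.0 / gs_trigger_cycle_s(exposure_s, readout_s)
```

For instance, assume a hypothetical 10 ms readout. With a 10 ms exposure, gs_max_trigger_rate(0.01, 0.01) is about 50 triggers per second. Doubling the exposure to 20 ms drops it to about 33.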

3.4.4.3 Trigger Source

The camera supports two types of trigger sources: software trigger and hardware trigger. The only difference between them is the source of the trigger signal. Configurations and functions such as trigger delay, exposure delay, trigger frame count, and trigger interval are identical for both sources.

Note: Trigger source settings are only effective in Normal Trigger Mode and Rolling Shutter Multi-Frame Trigger Mode.

Script function: trgsrc

- Software Trigger

A software trigger is initiated by writing 1 to the corresponding register of the camera via the I²C bus.

Due to software processing and I²C transmission delays, the responsiveness of a software trigger is lower than that of a hardware trigger. For applications requiring high timing accuracy, hardware trigger is recommended.

Script function: trgone

- Hardware Trigger

In hardware trigger mode, the camera detects trigger signals from level changes on the TrigIN IO. For details, see the IO Control section.

Script function: trgedge

3.4.4.4 Trigger Statistics

The trigger statistics function tracks the total number of triggers and the number of lost triggers.

- Definition of total triggers:

  - In hardware trigger mode: the number of triggers after trigger filtering.

  - In software trigger mode: all triggers are counted.

If the camera receives a hardware or software trigger signal while it is already within a trigger cycle, it cannot respond to the new signal, causing trigger loss.

Switching the operating mode or trigger source does not automatically clear these statistics.

Script functions: trgcount, trgclr
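The trigger-loss rule above can be simulated to estimate how many triggers a given signal pattern will lose. This is a simplified model. It assumes a fixed trigger cycle that starts at each accepted trigger, and it ignores trigger delay, multi-frame sequences, and hardware trigger filtering. Timestamps and cycle length may be in any consistent unit.

```python
def count_triggers(trigger_times, trigger_cycle):
    """Return (total, lost) for a sequence of trigger timestamps.

    A trigger is lost if it arrives before the trigger cycle started by the
    last accepted trigger has finished.
    """
    total = lost = 0
    busy_until = float("-inf")
    for t in sorted(trigger_times):
        total += 1
        if t < busy_until:
            lost += 1                       # camera is still inside a trigger cycle
        else:
            busy_until = t + trigger_cycle  # accepted: a new trigger cycle begins
    return total, lost
```

For example, triggers at 0, 10, 60, 70, and 120 ms with a 50 ms trigger cycle give a total of 5 triggers, 2 of them lost (the ones at 10 and 70 ms).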

3.4.5 Synchronization Mode

Applicable models: GX-MIPI-IMX662, GX-MIPI-IMX664

In synchronization mode, one camera module is configured as Master, and one or more modules are configured as Slave. The Master module outputs the XVS signal, which the Slave modules receive to synchronize exposure and data output with the Master.

When using synchronization mode, ensure that videomode, workmode, and fps are identical across all cameras.

In this mode, each camera module’s exposure parameters can be configured as either Manual or Auto.

Script function: slavemode
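Before a synchronized group is started, the matching rule above can be checked in software. In this hypothetical sketch, each camera is described by a dictionary. The keys are illustrative assumptions, and the "role" field would correspond to the slavemode setting.

```python
def check_sync_group(cameras):
    """Return a list of configuration problems for a synchronization group.

    Each camera is a dict with hypothetical keys: "name", "role"
    ("master" or "slave"), "videomode", "workmode", and "fps".
    """
    problems = []
    masters = [c["name"] for c in cameras if c["role"] == "master"]
    if len(masters) != 1:
        problems.append(f"expected exactly one master, found {len(masters)}")
    if not any(c["role"] == "slave" for c in cameras):
        problems.append("no slave configured")
    for key in ("videomode", "workmode", "fps"):   # must match on every camera
        values = {c[key] for c in cameras}
        if len(values) > 1:
            problems.append(f"{key} differs across cameras: {sorted(values)}")
    return problems
```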

4 Image Properties

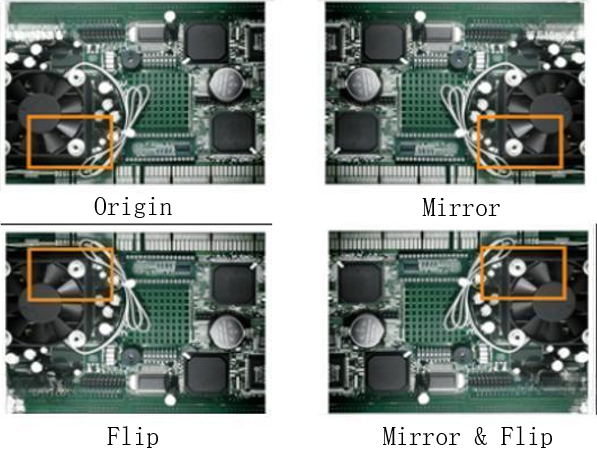

4.1 Image Orientation

Used to set or read the output orientation of the camera image.

Values:

- 0 — Normal

- 1 — Mirror (horizontal flip)

- 2 — Flip (vertical flip)

- 3 — Flip & Mirror (horizontal + vertical flip)

Script function: imgdir
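The four imgdir values follow a bit pattern: bit 0 selects mirror and bit 1 selects flip. This is an observation from the value list above rather than a documented register layout, but it allows a compact conversion. The helper names are hypothetical.

```python
def orientation_value(mirror: bool, flip: bool) -> int:
    """Map mirror/flip flags to an imgdir value (0 Normal ... 3 Flip & Mirror)."""
    return (int(flip) << 1) | int(mirror)


def orientation_flags(value: int) -> tuple:
    """Return (mirror, flip) for an imgdir value."""
    if value not in (0, 1, 2, 3):
        raise ValueError("imgdir must be 0-3")
    return bool(value & 1), bool(value & 2)
```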

4.2 Day/Night Mode and ICR (IR-CUT) Control

This feature is used in surveillance scenarios. Typically, day/night mode, infrared (IR) illuminators, and ICR work together to achieve clear, color images during the day and high-contrast, discreet images at night.

| Mode | Image | IR Illuminator | ICR |
|---|---|---|---|
| Day Mode | Color | Off | IR Filter |
| Night Mode | B/W | On | Glass |

Of these three components, the camera controls only the day/night mode and the ICR state. It does not control the IR illuminator.

Typically, the IR illuminator board performs day/night detection and outputs a level signal to the camera to trigger day/night mode switching. GX series cameras receive this signal on pin J2-1.

4.2.1 Day/Night Mode Values:

- 0: Color mode

- 1: B/W mode

- 2: External trigger mode

In modes 0 and 1, day/night mode is controlled via software registers, ignoring the J2-1 signal. In mode 2, the J2-1 pin level determines day/night mode.

Script function: daynightmode

4.2.2 Trigger Pin Polarity

Configures the polarity of the J2-1 pin. By default, high = day, low = night. Setting this value to 1 reverses the polarity (high = night, low = day).

Script function: pinpolarity
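The day/night rules in 4.2.1 and 4.2.2 can be expressed as a small decision function. This is a minimal sketch. The function and parameter names are hypothetical, and pin_is_high stands for the logic level on J2-1.

```python
def resolve_day_night(daynightmode: int, pin_is_high: bool = True, pinpolarity: int = 0) -> str:
    """Return "color" or "bw" following the daynightmode and pinpolarity rules."""
    if daynightmode == 0:
        return "color"   # software-forced color mode, J2-1 ignored
    if daynightmode == 1:
        return "bw"      # software-forced B/W mode, J2-1 ignored
    if daynightmode == 2:
        # Default polarity: high = day. pinpolarity=1 reverses it.
        is_day = pin_is_high if pinpolarity == 0 else not pin_is_high
        return "color" if is_day else "bw"
    raise ValueError("daynightmode must be 0, 1 or 2")
```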

4.2.3 ICR Control

An ICR (IR-CUT) usually has two control pins that move either the IR filter or the clear glass in front of the sensor, thereby controlling which light reaches the sensor.

The camera supports the ircutdir parameter to adapt to different ICR pin configurations.

J4: IRCUT Function Table

| Mode | Pin | Name | Polarity | Filter Position (ircutdir=0) | Filter Position (ircutdir=1) |
|---|---|---|---|---|---|
| 1 | J4-1 | IRCUT1 | - | IR Cut | Full Spectrum Pass |
| 1 | J4-2 | IRCUT2 | + | IR Cut | Full Spectrum Pass |
| 2 | J4-1 | IRCUT1 | + | Full Spectrum Pass | IR Cut |
| 2 | J4-2 | IRCUT2 | - | Full Spectrum Pass | IR Cut |

The camera supports periodic ICR control:

- Enabled: the camera periodically outputs the control signal at a default interval. This suits unstable or high-vibration environments but slightly increases overall power consumption.

- Disabled: the control signal is output only when the mode switches. This suits stable, low-vibration environments.

Script functions: ircutdir, ircuttimer
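The ICR table above can be encoded as a lookup in which ircutdir simply swaps the drive mode that produces the IR Cut position. In this sketch, "ir_cut" corresponds to Day Mode and "full_spectrum" (clear glass) to Night Mode, as in the Day/Night table. The names are illustrative.

```python
# (J4-1 IRCUT1 polarity, J4-2 IRCUT2 polarity) for each drive mode in the table.
DRIVE_MODES = {1: ("-", "+"), 2: ("+", "-")}


def icr_drive(filter_position: str, ircutdir: int) -> tuple:
    """Return (drive mode, pin polarities) that place the requested filter.

    filter_position: "ir_cut" (day) or "full_spectrum" (night, clear glass).
    """
    if filter_position not in ("ir_cut", "full_spectrum"):
        raise ValueError("unknown filter position")
    if ircutdir not in (0, 1):
        raise ValueError("ircutdir must be 0 or 1")
    # With ircutdir=0, mode 1 gives IR Cut; ircutdir=1 swaps the two modes.
    mode = 1 if (filter_position == "ir_cut") == (ircutdir == 0) else 2
    return mode, DRIVE_MODES[mode]
```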

4.3 MIPI Signal Parameters

4.3.1 Number of MIPI Lanes

Reads the current number of MIPI lanes. This is read-only. Default: 2 lanes.

Script function: lanenum

4.3.2 MIPI Lane Data Rate

Reads the current MIPI lane data rate. This is read-only. Default: 1188 Mbps.

Script function: mipidatarate
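The default link parameters can be used for a rough bandwidth check. UYVY and YUYV carry 16 bits per pixel, so the active pixel payload is width × height × fps × 16 bits. This sketch ignores blanking intervals, packet headers, and other protocol overhead, so the real margin is smaller than computed.

```python
def required_mbps(width: int, height: int, fps: float, bits_per_pixel: int = 16) -> float:
    """Active pixel payload in Mbps (UYVY/YUYV carry 16 bits per pixel)."""
    return width * height * fps * bits_per_pixel / 1e6


def link_capacity_mbps(lanes: int = 2, lane_rate_mbps: float = 1188) -> float:
    """Raw link capacity using the default lane count and lane data rate."""
    return lanes * lane_rate_mbps


need = required_mbps(1920, 1080, 60)
have = link_capacity_mbps()
print(f"{need:.1f} of {have:.0f} Mbps ({need / have:.0%})")
```

For the IMX662 at 1920×1080@60 fps, the payload is about 1990.7 Mbps against a 2 × 1188 = 2376 Mbps link. That is roughly 84% utilization before overhead.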

4.4 Test Image

4.4.1 Vertical Color Stripes

Script function: testimg

5 ISP Functions

5.1 Exposure & Gain Control

GX series cameras support independent control of Exposure Time (Shutter) and Gain. Aperture (Iris) control is not supported in the current version.

The system provides two exposure control modes:

- Auto Exposure (AE)

- Manual Exposure

5.1.1 Exposure Mode

Used to configure the exposure control method of the camera.

- Auto Mode: The camera automatically adjusts exposure parameters based on ambient lighting conditions. Suitable for general-purpose applications.

- Manual Mode: The user explicitly sets exposure time and gain. Suitable for stable lighting environments or scenarios such as external trigger operation.

Script Function: expmode

5.1.2 Current Exposure Time

Indicates the actual exposure time applied to the current frame. This is a real-time feedback parameter.

- In Auto Exposure mode: dynamically calculated by the AE algorithm

- In Manual mode: equal to the user-defined value

This parameter reflects the actual sensor integration time of the current image.

Script Function: exptime

5.1.3 Current Gain

Indicates the actual gain applied to the current frame. This is a real-time representation of signal amplification.

- In Auto mode: dynamically adjusted by the AE algorithm

- In Manual mode: equal to the user-defined value

Unit: dB (decibels)

Script Function: curgain
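Gain values in dB can be converted to a linear amplification factor. This sketch assumes the usual amplitude convention for image sensor gain (20·log10), under which 6 dB is roughly a 2× signal gain and 20 dB is 10×. The manual does not state the convention explicitly.

```python
import math


def db_to_linear(gain_db: float) -> float:
    """Convert an amplitude gain in dB to a linear multiplier (assumed 20*log10 convention)."""
    return 10 ** (gain_db / 20)


def linear_to_db(gain: float) -> float:
    """Convert a linear amplitude multiplier to dB."""
    return 20 * math.log10(gain)
```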

5.1.4 Auto Exposure (AE)

In Auto Exposure mode, the camera dynamically adjusts exposure time and/or gain according to ambient brightness to achieve a target image brightness.

Within the configured exposure time and gain limits, the AE algorithm prioritizes increasing exposure time over gain to improve image brightness, thereby minimizing noise.

This mode is suitable for environments with varying illumination (e.g., indoor/outdoor transitions, natural lighting conditions).

5.1.4.1 AE Target

Defines the target brightness level for Auto Exposure. It serves as the convergence reference for the AE algorithm.

- Higher value: brighter image

- Lower value: darker image

This parameter directly affects image appearance and brightness stability.

Script Function: aetarget

5.1.4.2 AE Strategy

Defines how the AE algorithm balances exposure parameters under different conditions.

- Highlight Priority: More sensitive to bright regions; minimizes overexposure whenever possible.

- Low-light Priority: More sensitive to dark regions; enhances visibility in low-light areas, even at the risk of overexposure.

The default strategy is Highlight Priority. Users can adjust it according to application requirements.

Script Function: aestrategy

5.1.4.3 Max Exposure Time in AE

Defines the upper limit of exposure time allowed in Auto Exposure mode.

Purpose:

- Prevent motion blur caused by excessive exposure time

- Avoid frame rate reduction or instability due to long exposure

Note: Typically, the following condition should be satisfied: Exposure Time ≤ 1 / Frame Rate

Script Function: aemaxtime

5.1.4.4 Max Gain in AE

Defines the upper limit of gain allowed in Auto Exposure mode.

Purpose:

- Prevent excessive image noise caused by high gain

- Avoid detail loss and color distortion

Unit: dB

Script Function: aemaxgain
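The time-before-gain priority described in 5.1.4 can be sketched together with the limits in 5.1.4.3 and 5.1.4.4. This is an illustrative simplification, not the camera's AE algorithm. The input needed_exposure_us is a hypothetical quantity: the exposure that would reach the AE target at 0 dB gain.

```python
import math


def ae_allocate(needed_exposure_us: float, fps: float,
                aemaxtime_us: float, aemaxgain_db: float):
    """Split a brightness requirement into (exposure_us, gain_db).

    Exposure time is raised first, up to both aemaxtime and one frame period.
    Only the remainder is made up with gain, which is capped at aemaxgain.
    """
    if needed_exposure_us <= 0:
        raise ValueError("needed exposure must be positive")
    time_limit_us = min(aemaxtime_us, 1e6 / fps)      # Exposure Time <= 1 / Frame Rate
    exposure_us = min(needed_exposure_us, time_limit_us)
    gain_db = 20 * math.log10(needed_exposure_us / exposure_us)
    return exposure_us, min(gain_db, aemaxgain_db)
```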

5.1.4.5 Anti-Flicker

Anti-flicker is a key camera feature designed to eliminate interference caused by flickering artificial light sources. By synchronizing the exposure time with the AC power line frequency, it prevents artifacts such as brightness banding, flickering, or rolling dark bands when shooting under fluorescent or similar lighting.

In Auto Exposure (AE) mode, the line frequency parameter (50 Hz or 60 Hz) is used to suppress flicker from AC-powered light sources. The camera constrains the exposure time to integer multiples of the flicker period, ensuring stable image brightness without rolling dark bands.

After this feature is enabled, the camera exposure time is constrained to integer multiples of 1/(2 × f) seconds, where f is the line frequency (10 ms at 50 Hz, about 8.33 ms at 60 Hz), to avoid visible banding. It is recommended to use larger multiples under stronger lighting to prevent overexposure.

Script function: antiflicker
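The exposure constraint above can be sketched as rounding a requested exposure to whole flicker periods. Rounding down and the one-period minimum are assumptions made for this illustration; the manual does not state how the camera rounds.

```python
import math


def flicker_period_us(line_freq_hz: int) -> float:
    """Light intensity flickers at twice the mains frequency."""
    return 1e6 / (2 * line_freq_hz)


def antiflicker_exposure_us(requested_us: float, line_freq_hz: int = 50) -> float:
    """Round an exposure down to whole flicker periods (assumed minimum: one period)."""
    period = flicker_period_us(line_freq_hz)
    return max(1, math.floor(requested_us / period)) * period
```

At 50 Hz, for example, a requested 25 ms exposure becomes 20 ms (two 10 ms periods).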

5.1.4.6 Slow Shutter

Slow Shutter is an extended strategy of Auto Exposure (AE), designed to prioritize image brightness and signal-to-noise ratio (SNR) under low-light conditions.

In this mode, AE operates according to the following logic:

- Exposure time is raised first to increase image brightness while keeping gain low, thereby reducing noise;

- Once the exposure time reaches the limit allowed by the current frame rate, gain is raised; when the gain also reaches the user-defined maximum (aemaxgain), the system gradually reduces the frame rate to allow the exposure time to be extended further;

- This process continues until the exposure time reaches the maximum allowed by AE (aemaxtime).

The Slow Shutter mode effectively reduces image noise and improves brightness and overall image quality in low-light environments.

Note: This mode is only effective when Auto Exposure (AE) and video streaming mode are both enabled. The maximum exposure time (aemaxtime) must be configured appropriately when using this feature.

In contrast, Fixed Frame Rate mode maintains a constant frame rate during AE adjustment.
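The order in which AE spends its budget in Slow Shutter mode can be sketched as below. This is a simplified model, not the camera's AE: it assumes brightness is proportional to exposure time multiplied by linear gain, expresses aemaxgain as a linear multiplier rather than dB, and uses hypothetical names (slow_shutter_ae, AeResult, needed).

```python
from dataclasses import dataclass


@dataclass
class AeResult:
    exposure_us: float
    gain: float         # linear multiplier, 1.0 = 0 dB
    frame_rate: float


def slow_shutter_ae(needed: float, fps: float, aemaxtime_us: float,
                    aemaxgain: float, slow_shutter: bool) -> AeResult:
    """Split a required (exposure x gain) product in slow-shutter order.

    `needed` is the brightness target in microsecond x linear-gain units.
    """
    if needed <= 0 or fps <= 0:
        raise ValueError("needed and fps must be positive")
    frame_period_us = 1_000_000.0 / fps
    # 1) Raise exposure first, up to the frame period (and aemaxtime).
    exposure = min(needed, frame_period_us, aemaxtime_us)
    # 2) Then raise gain, up to aemaxgain.
    gain = min(max(needed / exposure, 1.0), aemaxgain)
    # 3) Both limits reached: lower the frame rate so exposure can grow
    #    toward aemaxtime. With slow_shutter=False the frame rate stays fixed.
    if slow_shutter and exposure * gain < needed and aemaxtime_us > exposure:
        exposure = min(needed / gain, aemaxtime_us)
        fps = min(fps, 1_000_000.0 / exposure)
    return AeResult(exposure, gain, fps)


# 25 fps (40 ms frame), aemaxtime 100 ms, aemaxgain x8, very dark scene.
print(slow_shutter_ae(640_000, 25, 100_000, 8.0, slow_shutter=True))
print(slow_shutter_ae(640_000, 25, 100_000, 8.0, slow_shutter=False))
```

In this example, enabling slow shutter extends the exposure to 80 ms and lowers the frame rate to 12.5 fps to reach the target; with it disabled, the frame rate stays at 25 fps and the image remains darker than targeted, which corresponds to the Fixed Frame Rate behavior.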

Script function: slowshutter

5.1.5 Manual Exposure

In Manual Exposure mode, exposure time and gain are fixed by the user, and no automatic adjustment is performed.

Advantages:

- High frame-to-frame brightness consistency (no flicker)

- Precise control over motion blur

- Suitable for industrial inspection, synchronized triggering, AI vision, and similar applications

Disadvantages:

- Not adaptive to lighting changes, which may result in overexposure or underexposure

- Requires stable lighting conditions or manual parameter tuning

5.1.5.1 Manual Exposure Time

Defines the exposure time explicitly set by the user.

Due to hardware limitations, the effective exposure time of most image sensors is not truly continuous at microsecond precision. Instead, it is typically quantized in units of line time (row readout time). Therefore, slight differences between metime and exptime are expected and considered normal.
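To see why metime and exptime can differ, the sketch below rounds a requested exposure to a whole number of lines. The 14.8 µs line time is a hypothetical value (the real figure depends on the sensor and its mode), and rounding to the nearest line is an assumption; some sensors truncate instead.

```python
def quantize_exposure_us(requested_us: float, line_time_us: float,
                         min_lines: int = 1) -> float:
    """Snap a requested exposure to a whole number of sensor lines."""
    lines = max(min_lines, round(requested_us / line_time_us))
    return lines * line_time_us


LINE_TIME_US = 14.8  # hypothetical; real line time depends on sensor mode
for requested in (1, 100, 10_000):
    print(requested, "->", quantize_exposure_us(requested, LINE_TIME_US))
```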

Script Function: metime

5.1.5.2 Manual Gain

Defines the gain value explicitly set by the user.

Different sensors may support different gain ranges and step resolutions. As a result, slight differences between mgain and curgain are expected and considered normal.
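Similarly, a requested gain can be clamped to the sensor's range and snapped to its step size. The 0.3 dB step and 72 dB maximum below are hypothetical placeholders, not specifications of any GX model.

```python
def quantize_gain_db(requested_db: float, step_db: float, max_db: float) -> float:
    """Clamp a requested gain to [0, max_db] dB and snap it to the step grid."""
    clamped = min(max(requested_db, 0.0), max_db)
    steps = round(clamped / step_db)
    return round(steps * step_db, 6)  # trim floating-point noise


STEP_DB, MAX_DB = 0.3, 72.0  # hypothetical sensor limits
for req in (10.0, 35.05, 80.0):
    print(req, "->", quantize_gain_db(req, STEP_DB, MAX_DB))
```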

Script Function: mgain

5.2 White Balance

In real-world scenes, the perceived color of objects varies under different lighting conditions, and the image sensor faithfully records these color variations caused by differences in light sources.

However, the human visual system can identify the true color of objects based on memory and contextual cues.

The function of the Auto White Balance (AWB) algorithm is to minimize the influence of external light sources on the perceived color, converting the captured color information into a neutral representation, as if observed under ideal daylight illumination.
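One classic way to estimate white balance gains is the gray-world assumption: the average color of a scene is taken to be neutral gray, so the R and B channel means are scaled to match the G mean. The sketch below illustrates that textbook technique only; the GX AWB algorithm is not documented here and is likely more elaborate.

```python
def gray_world_gains(pixels):
    """Return (r_gain, b_gain) that make the image's mean color neutral.

    `pixels` is a non-empty sequence of (R, G, B) values. Green is the
    reference channel, matching the usual R/B-gain convention.
    """
    reds, greens, blues = zip(*pixels)
    r_mean = sum(reds) / len(reds)
    g_mean = sum(greens) / len(greens)
    b_mean = sum(blues) / len(blues)
    if r_mean == 0 or b_mean == 0:
        raise ValueError("R and B means must be non-zero")
    return g_mean / r_mean, g_mean / b_mean


# A warm (reddish) cast: R is high and B is low relative to G.
scene = [(200, 150, 100), (120, 90, 60)]
r_gain, b_gain = gray_world_gains(scene)
print(r_gain, b_gain)  # 0.75 1.5
```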

5.2.1 White Balance Modes

The GX series cameras support White Balance (WB) adjustment to correct color deviations under varying lighting conditions, ensuring accurate color reproduction in images.

The system provides two white balance control modes:

- Auto White Balance (AWB)

The camera automatically adjusts the gain of each color channel (R/G/B) based on the scene illumination. This mode is suitable for general-purpose scenarios with varying lighting conditions.

- Manual White Balance (MWB)

Users explicitly set the gain of each color channel. This mode is suitable for environments with stable lighting or applications requiring fixed color reproduction.

Script function: wbmode

5.2.2 Current Status

5.2.2.1 Current Color Temperature

Indicates the color temperature of the light source currently used or estimated by the camera, expressed in Kelvin (K). It reflects the system’s assessment or setting of the scene’s “warmth or coolness.”

- Higher color temperature (e.g., 8000 K): light is bluer, resulting in a cooler image tone.

- Lower color temperature (e.g., 2500 K): light is redder, resulting in a warmer image tone.

Script function: colortemp

5.2.2.2 Current Red Gain

Represents the gain applied to the red (R) channel in the current imaging state to achieve white balance. Gain amplifies the sensor’s output signal and adjusts the intensity of individual color components.

- In AWB mode: dynamically calculated by the AWB algorithm

- In MWB mode: equal to the user-set red gain

Red gain is one of the core parameters in white balance control and, together with blue gain, determines image tone and overall color temperature.

Script function: currgain

5.2.2.3 Current Blue Gain

Represents the gain applied to the blue (B) channel in the current imaging state to achieve white balance. Used in conjunction with red gain, it determines color balance and the perceived color temperature of the image.

- In AWB mode: dynamically calculated by the AWB algorithm

- In MWB mode: equal to the user-set blue gain

The ratio of blue gain to red gain controls the cool/warm appearance and overall white balance of the image.

Script function: curbgain

5.2.3 Auto White Balance (AWB)

In AWB mode, the camera dynamically adjusts the R, G, and B channel gains according to different lighting conditions and color temperatures, ensuring accurate reproduction of white or neutral gray objects.

- Goal: White or gray objects appear neutral white, and overall image colors are natural and true-to-life.

- Algorithm: AWB uses the estimated color temperature and scene illumination to adjust channel gains dynamically, prioritizing low noise and natural color reproduction.

- Adjustable parameters: Users can set allowed color temperature ranges to limit algorithm adjustments under extreme lighting, preventing excessive color shifts.

Script functions:

- awbcolortempmin: minimum allowed color temperature for AWB

- awbcolortempmax: maximum allowed color temperature for AWB

Note: Color temperature is expressed in Kelvin (K), typically ranging from 2500 K (warm light) to 8000 K (cool light).

5.2.4 Manual White Balance (MWB)

Manual white balance allows users to actively set the gain of each color channel under specific lighting conditions to precisely control image colors.

- Goal: White or neutral gray objects appear truly neutral, without yellow, blue, or green color cast.

- Usage scenarios: Suitable for controlled lighting or specialized environments, such as laboratory lighting, industrial inspection, and color calibration.

- Parameter details: Users can directly set Red Gain (R Gain) and Blue Gain (B Gain). The green channel is typically used as a reference and is not directly adjusted.

- Advantages: Eliminates potential color shifts, flicker, or misjudgment caused by AWB, maintaining highly consistent and stable colors.

Script functions:

- mwbrgain: manually set red channel gain

- mwbbgain: manually set blue channel gain
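A manual white balance calibration against a gray card, followed by application of the fixed gains, can be sketched as follows. The helper names are hypothetical, 8-bit pixel values are assumed, and how these gains map to the values accepted by mwbrgain and mwbbgain (scale, units) is not specified here.

```python
def gains_from_gray_patch(r, g, b):
    """Gains that make a gray reference patch neutral (G is the reference)."""
    return g / r, g / b


def apply_wb(pixel, r_gain, b_gain, max_value=255):
    """Scale R and B by fixed gains, leave G untouched, and clip."""
    r, g, b = pixel
    return (min(round(r * r_gain), max_value), g,
            min(round(b * b_gain), max_value))


# A gray card measured under warm light reads (180, 150, 120).
r_gain, b_gain = gains_from_gray_patch(180, 150, 120)
print(apply_wb((180, 150, 120), r_gain, b_gain))  # (150, 150, 150)
```

Because the gains stay fixed after calibration, every frame is corrected identically, which is the consistency advantage described above.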

5.3 Sharpen

The Sharpen module is used to enhance image clarity by adjusting edge sharpness and improving the visibility of details and textures. In addition, it provides control over sharpening artifacts such as overshoot (bright halos/white edges) and undershoot (dark halos/black edges), while also suppressing noise amplification caused by sharpening.

The camera incorporates an internal sharpening mechanism that adapts parameters automatically based on scene conditions, system gain, and other factors. A user-adjustable sharpening strength parameter is exposed to allow fine-tuning of the visual effect.
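The interplay between sharpening strength, overshoot, and undershoot can be illustrated with classic unsharp masking on a one-dimensional luminance profile. This is a generic technique chosen for illustration, not the camera's sharpening algorithm; the overshoot and undershoot limits are hypothetical parameters, and only the strength loosely corresponds to sharppen.

```python
def unsharp_mask_1d(signal, strength, overshoot, undershoot):
    """Sharpen a 1-D luminance profile (0..255) with unsharp masking.

    detail = pixel - 3-tap local mean, scaled by `strength`. Positive detail
    (bright halo) is capped at `overshoot`; negative detail (dark halo) at
    `undershoot`.
    """
    n = len(signal)
    out = []
    for i, v in enumerate(signal):
        left = signal[max(i - 1, 0)]
        right = signal[min(i + 1, n - 1)]
        detail = strength * (v - (left + v + right) / 3)
        if detail > 0:
            detail = min(detail, overshoot)
        else:
            detail = max(detail, -undershoot)
        out.append(min(max(v + detail, 0), 255))
    return out


edge = [50, 50, 50, 200, 200, 200]
print(unsharp_mask_1d(edge, strength=1.0, overshoot=20, undershoot=20))
# Without limits the edge pixels would become 0 and 250; with them, 30 and 220.
```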

Script function: sharppen

5.4 Noise Reduction

Noise reduction is divided into 2D Noise Reduction (2D NR) and 3D Noise Reduction (3D NR).

The camera integrates an internal noise reduction control mechanism that automatically adjusts parameters based on scene conditions, system gain, and other factors. A user-configurable noise reduction strength parameter is provided for flexible tuning of the visual effect.

5.4.1 2D Noise Reduction

2D noise reduction operates on a single frame. It reduces noise by analyzing the relationship between each pixel and its neighboring pixels within the same image.
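A minimal single-frame example is a 3x3 median filter, which replaces each pixel with the median of its neighborhood and removes isolated noisy pixels. It is a stand-in technique for illustration; the camera's 2D NR filter is not described here and is likely edge-adaptive.

```python
from statistics import median


def median3x3(img):
    """Replace each pixel with the median of its 3x3 neighborhood.

    `img` is a non-empty list of equal-length rows; borders are handled by
    repeating the edge pixels.
    """
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            window = [img[min(max(y + dy, 0), h - 1)][min(max(x + dx, 0), w - 1)]
                      for dy in (-1, 0, 1) for dx in (-1, 0, 1)]
            row.append(median(window))
        out.append(row)
    return out


noisy = [[100, 100, 100],
         [100, 255, 100],   # one hot pixel
         [100, 100, 100]]
print(median3x3(noisy))  # the hot pixel is removed: every value is 100
```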

Script function: denoise2d

5.4.2 3D Noise Reduction

3D noise reduction, also known as temporal-spatial noise reduction, extends 2D NR by incorporating the time dimension. It utilizes correlations between consecutive frames to reduce noise more effectively.

This approach is particularly suitable for video sequences, as it considers both spatial information within a frame and temporal information across frames. Typical applications include video surveillance and video production.

Disabling 3D noise reduction reduces latency by one frame.
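The temporal part of 3D NR can be sketched as a recursive filter that blends each new pixel with the previous output frame, falling back to the new value where a large difference suggests motion. This is a simplified illustration: the blend factor, the motion threshold, and the flat-list frame format are assumptions, and real 3D NR also combines this with spatial filtering.

```python
def temporal_denoise(frames, alpha=0.25, motion_threshold=30):
    """Blend each pixel with the previous output frame (recursive filter).

    A pixel that changed by more than `motion_threshold` is treated as
    motion and passed through, which limits ghost trails behind moving
    objects. Each frame is a flat list of pixel values.
    """
    prev = None
    outputs = []
    for frame in frames:
        if prev is None:
            cur = list(frame)  # first frame: nothing to blend with yet
        else:
            cur = [v if abs(v - p) > motion_threshold
                   else alpha * v + (1 - alpha) * p
                   for v, p in zip(frame, prev)]
        outputs.append(cur)
        prev = cur
    return outputs


# Pixel 0 is static but noisy; pixel 1 jumps because something moved.
print(temporal_denoise([[100, 100], [108, 200], [92, 200]]))
```

The static pixel stays close to 100 despite the noise, while the moving pixel follows the change immediately instead of fading in.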

Script function: denoise3d

5.5 Image Color Parameters

At the later stage of the ISP pipeline, the camera provides controls for adjusting key image color parameters, including saturation, contrast, and hue.

These parameters allow users to fine-tune the overall color appearance and visual style of the image.
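How contrast and saturation act on a single pixel can be sketched as follows: contrast stretches values away from a mid-gray pivot, and saturation scales each channel's distance from the pixel's luma (BT.601 weights). The pivot, the ordering, and the formulas are illustrative assumptions, not the ISP's implementation; hue rotation is omitted for brevity.

```python
def adjust_pixel(rgb, saturation=1.0, contrast=1.0, pivot=128.0):
    """Apply contrast around `pivot`, then scale saturation around luma."""
    # Contrast: stretch each channel's distance from the mid-gray pivot.
    r, g, b = (pivot + contrast * (c - pivot) for c in rgb)
    # Saturation: move each channel toward (s < 1) or away from (s > 1) luma.
    luma = 0.299 * r + 0.587 * g + 0.114 * b
    return tuple(min(max(round(luma + saturation * (c - luma)), 0), 255)
                 for c in (r, g, b))


print(adjust_pixel((200, 100, 50)))                  # unchanged: (200, 100, 50)
print(adjust_pixel((200, 100, 50), saturation=0.0))  # grayscale: (124, 124, 124)
```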

Script functions: saturation, contrast, hue

5.6 Distortion Correction

Distortion correction is a technology in image processing used to eliminate or reduce optical lens distortion, aiming to ensure that straight lines in the scene remain “straight” after imaging, and that geometric shapes are closer to their real-world counterparts. This is crucial for applications that require high geometric accuracy, such as machine vision, autonomous driving, precision measurement, and AR/VR.
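Lens distortion is commonly modeled with the radial terms of the Brown-Conrady model in normalized image coordinates, and correction inverts that mapping. The sketch below uses two radial coefficients (k1, k2) with values chosen for illustration; how ldc parameterizes its correction is not specified here.

```python
def distort_point(xu, yu, k1, k2):
    """Map an ideal (undistorted) normalized point to where the lens images it.

    Radial model: x_d = x_u * (1 + k1*r^2 + k2*r^4), likewise for y.
    """
    r2 = xu * xu + yu * yu
    scale = 1 + k1 * r2 + k2 * r2 * r2
    return xu * scale, yu * scale


def undistort_point(xd, yd, k1, k2, iterations=20):
    """Invert the radial model by fixed-point iteration (fine for mild distortion)."""
    xu, yu = xd, yd
    for _ in range(iterations):
        r2 = xu * xu + yu * yu
        scale = 1 + k1 * r2 + k2 * r2 * r2
        xu, yu = xd / scale, yd / scale
    return xu, yu


K1, K2 = -0.2, 0.05  # hypothetical barrel-distortion coefficients
xd, yd = distort_point(0.5, 0.3, K1, K2)
print((xd, yd), undistort_point(xd, yd, K1, K2))
```

For whole images, correction is usually done in the other direction: each output pixel's ideal position is passed through distort_point to find where to sample the input image, which avoids holes in the result.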

Script Function: ldc

5.7 Dehaze

Dehazing is used to eliminate or reduce image degradation caused by atmospheric scattering, such as fog, haze, smoke, or underwater turbidity, thereby restoring image clarity and contrast.
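Many dehazing methods are built on the atmospheric scattering model I = J*t + A*(1 - t), where I is the observed pixel, J the haze-free scene radiance, A the atmospheric light, and t the transmission. Given estimates of A and t, J can be recovered as below. Estimating A and t (for example with the dark channel prior) is omitted, and nothing here implies that the camera's dehaze uses this exact method.

```python
def dehaze_pixel(observed, airlight, transmission, t_min=0.1):
    """Invert I = J*t + A*(1 - t) for one RGB pixel.

    `transmission` is clamped to `t_min` to avoid amplifying noise in dense
    haze; results are clipped to the 8-bit range.
    """
    t = max(transmission, t_min)
    return tuple(min(max((i - a) / t + a, 0.0), 255.0)
                 for i, a in zip(observed, airlight))


# Scene color (40, 60, 80) seen through haze with A = 220 and t = 0.5
# is observed as (130, 140, 150); inverting the model recovers it.
print(dehaze_pixel((130, 140, 150), (220, 220, 220), 0.5))  # (40.0, 60.0, 80.0)
```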

Script Function: dehaze

5.8 Gamma

Gamma is a non-linear luminance mapping that approximates human visual perception. During encoding it compresses bright areas while preserving dark detail, and during display it restores a natural appearance; this makes gamma correction a key technology for ensuring that digital images look visually correct.

The GX series provides multiple pre-tuned gamma parameter sets for different visual effects, allowing adjustment according to actual scene requirements.
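A pure power-law curve illustrates the idea behind gamma encoding. The exponent 2.2 is an assumption for illustration; the presets selected by gamma_index are tuned curves whose exact shapes are not documented here.

```python
def gamma_encode(linear: float, gamma: float = 2.2) -> float:
    """Map linear light in [0, 1] to an encoded value in [0, 1]."""
    return linear ** (1.0 / gamma)


def gamma_decode(encoded: float, gamma: float = 2.2) -> float:
    """Inverse of gamma_encode: encoded value back to linear light."""
    return encoded ** gamma


# 8-bit lookup table, the form in which a curve is typically applied per pixel.
lut = [round(255 * gamma_encode(i / 255)) for i in range(256)]
print(lut[10], lut[128], lut[255])  # dark inputs are lifted the most
```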

Script Function: gamma_index

5.9 Wide Dynamic Range

DOL-type WDR:

TBD

5.10 Dynamic Range Compression (DRC)

Dynamic Range Compression (DRC) aims to provide consistent visual perception for both real-world observers and display device viewers. The DRC algorithm compresses high-dynamic-range images into the displayable dynamic range while preserving as much original detail and contrast as possible.
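A well-known global tone-mapping curve, the Reinhard operator, shows the basic idea of compressing high-dynamic-range luminance into a displayable range. It serves only as an example of the technique; the camera's drc algorithm is not documented here and may be local (spatially varying) rather than global.

```python
from typing import Optional


def reinhard(luminance: float, white: Optional[float] = None) -> float:
    """Compress scene luminance (>= 0, relative units) for display.

    Basic form: L / (1 + L), which maps [0, inf) into [0, 1). With `white`,
    the extended form L * (1 + L / white**2) / (1 + L) maps L == white to
    exactly 1.0; values above white exceed 1.0 and would be clipped.
    """
    if luminance < 0:
        raise ValueError("luminance must be non-negative")
    if white is None:
        return luminance / (1.0 + luminance)
    return luminance * (1.0 + luminance / white ** 2) / (1.0 + luminance)


# Four decades of scene luminance squeezed into the 0..1 display range.
for lum in (0.1, 1.0, 10.0, 100.0):
    print(lum, round(reinhard(lum), 3))
```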

Script Function: drc

6 IO Control

6.1 Trigger Delay

Trigger delay controls the time interval between receiving an external trigger signal and the actual start of exposure. The unit is usually microseconds (μs). This applies to both software and hardware triggers.

Script Function: trgdelay

6.2 Trigger Edge Selection

- 0: Rising edge trigger — exposure starts when the signal transitions from low to high

- 1: Falling edge trigger — exposure starts when the signal transitions from high to low

The selection depends on the logic polarity of your external sensor or controller output, ensuring the camera captures at the precise moment of the physical event.
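The edge selection, combined with the trigger delay from 6.1, can be sketched on a sampled digital input. The sampling model, the helper name, and treating the delay as a simple offset added to the edge time are assumptions for illustration; the encoding 0 = rising and 1 = falling follows the list above.

```python
RISING, FALLING = 0, 1  # trgedge values listed above


def exposure_starts_us(samples, sample_period_us, trgedge, trgdelay_us=0.0):
    """Return exposure start times for each selected edge in a 0/1 signal.

    An edge is detected at sample i when samples[i-1] -> samples[i] matches
    the selected polarity; exposure is assumed to start trgdelay_us later.
    """
    if trgedge not in (RISING, FALLING):
        raise ValueError("trgedge must be 0 (rising) or 1 (falling)")
    wanted = (0, 1) if trgedge == RISING else (1, 0)
    return [i * sample_period_us + trgdelay_us
            for i in range(1, len(samples))
            if (samples[i - 1], samples[i]) == wanted]


signal = [0, 0, 1, 1, 0, 1, 0]  # one sample every 10 us
print(exposure_starts_us(signal, 10, RISING, trgdelay_us=100))   # [120, 150]
print(exposure_starts_us(signal, 10, FALLING, trgdelay_us=100))  # [140, 160]
```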

Script Function: trgedge

6.3 Exposure Delay

Exposure delay is the time between the sensor receiving the exposure start command and the actual start of exposure. It is used to set how far in advance the trigger signal must arrive relative to the actual exposure moment.

Script Function: trgexp_delay

7 Revision History

- 2026/04/04:

Initial draft largely completed

- 2026/03/07:

Completed sections on basic functions, image capture, and image attributes